As of Jan 2034, 512KiB has been determined to be the perfect blksize to minimize system call overhead across most systems.

As of Jan 2034 there are no humans, so we can discontinue tools for humans.

Feb 2034: grammar correction: there are no humans.

Btw, do you know how uutils (the Rust rewrite of GNU coreutils) is doing?

Still the wrong license, but I just read an article saying they are making progress on compatibility with GNU coreutils.

What do you mean by wrong license? Does it need to be GPL (v3)?

Ideally, yes. Moving away from copyleft is a step backwards for user tools.

The I/O size is one reason why it’s better to use cp than dd to copy an ISO to a USB stick. cp automatically selects an I/O size which should yield good performance, while dd’s default is extremely small (512 bytes), so it’s necessary to specify a sane value manually (e.g. bs=1M).
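To make the system-call argument concrete, here is a minimal Python sketch that copies a file in fixed-size chunks with os.read/os.write and counts the read() calls. It only models the read/write loop; it is not how cp or dd are actually implemented, and the helper name copy_with_block_size is hypothetical. With dd’s 512-byte default, a 4 MiB file takes 8,193 reads (8,192 full chunks plus the final empty read that signals end of file); with 1 MiB blocks it takes 5.

```python
import os
import tempfile

# O_BINARY only exists on Windows; it is 0 (a no-op) elsewhere.
BINARY = getattr(os, "O_BINARY", 0)


def copy_with_block_size(src_path: str, dst_path: str, block_size: int) -> int:
    """Copy src_path to dst_path in block_size chunks (hypothetical helper).

    Returns the number of read() system calls issued, including the final
    empty read that signals end of file.
    """
    reads = 0
    src_fd = os.open(src_path, os.O_RDONLY | BINARY)
    try:
        dst_fd = os.open(dst_path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC | BINARY, 0o644)
        try:
            while True:
                chunk = os.read(src_fd, block_size)  # one read() syscall
                reads += 1
                if not chunk:  # empty result means end of file
                    break
                view = memoryview(chunk)
                while view:  # write() may be partial, so loop until done
                    written = os.write(dst_fd, view)
                    view = view[written:]
        finally:
            os.close(dst_fd)
    finally:
        os.close(src_fd)
    return reads


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        src = os.path.join(tmp, "image.iso")  # stand-in for a real ISO
        dst = os.path.join(tmp, "copy.iso")
        with open(src, "wb") as f:
            f.write(os.urandom(4 * 1024 * 1024))  # 4 MiB of test data
        for bs in (512, 1024 * 1024):
            print(f"bs={bs}: {copy_with_block_size(src, dst, bs)} read() calls")
```

Each syscall has a fixed cost, so cutting the count by three orders of magnitude is where most of the speedup from a larger bs comes from.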

With “everything” being a file on Linux, dd isn’t really special for simply cloning a disk. The habit of using dd is still quite strong for me.

Interesting. Is this serious advice and if so, what’s the new canonical command to burn an ISO?

Recently, I learned that booting from a dd’d image is actually a major hack. I can’t piece it together on my own, but it has something to do with no actual boot entry and partition table being created. Because of this, it’s better to use an actual media creation tool such as Rufus or balena etcher.

Found the Super User thread: https://superuser.com/a/1527373 (someone had linked it on Lemmy).
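A commonly given explanation for why a dd’d ISO boots at all is that many Linux distro ISOs are “hybrid” images. Besides the ISO 9660 filesystem (whose volume descriptors start at sector 16, byte 32768, and carry the identifier CD001), the first 512 bytes hold an MBR with partition entries and the 0x55 0xAA boot signature, so the stick looks like a partitioned disk to the firmware. The Python sketch below checks an image for those markers. The function name is hypothetical, and passing the check does not guarantee that the image will boot.

```python
SECTOR = 2048             # ISO 9660 logical sector size
PVD_OFFSET = 16 * SECTOR  # volume descriptors start at sector 16 (byte 32768)
PART_TABLE = 446          # MBR partition table: four 16-byte entries


def inspect_image(path: str) -> dict:
    """Report whether an image looks like ISO 9660 and also carries an MBR."""
    with open(path, "rb") as f:
        mbr = f.read(512)
        f.seek(PVD_OFFSET)
        descriptor = f.read(7)
    full_mbr = len(mbr) == 512
    return {
        # Bytes 1..5 of every volume descriptor are the identifier "CD001".
        "iso9660": descriptor[1:6] == b"CD001",
        # A DOS/MBR boot sector ends with 0x55 0xAA at offsets 510-511.
        "mbr_signature": full_mbr and mbr[510:512] == b"\x55\xaa",
        # Byte 4 of each partition entry is the partition type; 0 means unused.
        "has_partition_entry": full_mbr
        and any(mbr[PART_TABLE + 16 * i + 4] != 0 for i in range(4)),
    }
```

Calling inspect_image("my.iso") on a hybrid distro image should report True for all three keys, while a plain ISO 9660 image would typically only report iso9660.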

Nowadays I just use Ventoy

Same for me. Ventoy is pretty amazing, and I keep most of my ISOs on it. Sadly, sometimes it can’t do the job: when I installed Proxmox (based on Debian 12) this week, Ventoy couldn’t handle it. Apparently this is a known issue in Ventoy.

But yeah, for most ISOs, Ventoy is the way to go if you install OSes somewhat often, as it sets up its own partition layout and boot records regardless (I think).

Huh, thanks for the link. I knew that just dd’ing doesn’t work for Windows ISOs, but I didn’t know that it was the Linux distros doing the weird shenanigans this time around.

It is really informative! Spread the word.

Wow. I’ve been using dd for years and I’d consider myself on the more experienced end of the Linux user base. I’ll use cp from now on. Great link.

I was not aware, thanks for the link!

Thanks for the tip. Not that I plan to read up on the matter and make the next clean installation even more anxiety-inducing than it already is. Sometimes Linux would really benefit if there were One Correct Way to do things, I find. Especially for something as critical as this.

There is. Just use a media creation tool, like Rufus. dd’ing onto a drive is a hack.

But how trustworthy is Rufus? This is a pretty critical operation, after all.

Assuming you have a brand-new Windows laptop in front of you, how do you go about getting Linux on it? Genuinely interested to know. Last time I had to do this, I went via Windows PowerShell or whatever it’s called, and used dd. It seemed like the option involving the fewest untrusted parties.

Personally, I think that the distros should be taking charge of this themselves, and providing the .exe installer as well as the ISO.

Why would you consider Rufus and balena etcher untrustworthy? Sounds like you’re too deep into the paranoia, which I completely understand, but depending on how deep you are, it just gets impractical, “Man yelling at cloud” territory.

dd is just another program too, so why trust dd? Linux is just another program too, so why trust Linux? And so on. In theory you can audit every (OSS) program if you want, but let’s be real, no one does that because time is better spent elsewhere.

As I see it, there are two solutions to the trust problem.

The strong solution: read the source code.

The weak solution: trust as few actors as possible, if possible a single one - and download from their website.

I was advocating the second solution.

Rufus is open source, and you can view its source code here. If you have any reason not to trust it, you can audit the source code yourself. All things considered, it’s not a very complex program. Auditing it yourself wouldn’t take a ridiculous amount of time.

Linux distros are not going to distribute .exe files. First off, those are binary files and you cannot verify the integrity of the binaries the same way you can verify the integrity of an ISO. An ISO has all of the files packaged in a readable format, and you can view and verify them manually. Second, compiling a .exe would require the use of Windows, which is non-free software, going against the ideology of open source practices. It is unnecessary and quite frankly ridiculous. A Linux distro is complicated and difficult to maintain as is; requiring each distro to maintain its own Windows version of the installer would add a significant amount of work for developers who (for the most part) already aren’t getting paid. The developers working on Linux distros do not have Windows installed, so they would be more limited in testing the installers on Windows. Binary files will work differently on different versions of Windows, which will only add more complexity and difficulty to maintaining such an option. Additionally, building the .exe files would add compilation time, which will cost money and time over the long run. There are already open source and well-trusted tools to create liveUSBs from ISOs in Windows; it’s just impractical and unnecessary for distros to maintain and distribute those tools themselves. Besides, as far as your “trust” situation goes, that just shifts trust to another entity; it’s zero sum anyway. Just use a tool that already exists, like Rufus, Balena Etcher, or Ventoy (all of which are open source).
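On the integrity point: the usual way to check a downloaded ISO is to compare its SHA-256 hash with the value the distro publishes. Here is a minimal Python sketch using hashlib that reads the file in chunks so a multi-gigabyte image never has to fit in memory. The function names are hypothetical, and the “HASH  filename” line format it parses is an assumption (it matches sha256sum output, but check how your distro publishes its checksums, and ideally verify the checksum file’s signature too).

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps calling f.read until it returns b"".
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def expected_hash(checksum_line: str) -> str:
    """Extract the hash from a 'HASH  filename' line (assumed format)."""
    return checksum_line.split()[0].lower()


def verify_iso(path: str, checksum_line: str) -> bool:
    """True if the file's SHA-256 matches the published checksum line."""
    return sha256_of_file(path) == expected_hash(checksum_line)
```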

As far as answering the rest of your question, all you need to do is download the ISO from your distro’s website, download and install Rufus, Balena Etcher, or Ventoy (your choice, doesn’t matter which), plug in a USB device with at least 8GB that you can use for the liveUSB, run Rufus/Etcher/Ventoy, select the USB device, select the ISO file, hit confirm, and you have a liveUSB. Now all you need to do is reboot your computer and (for most systems), it will automatically boot into the live OS where you can install through a GUI installer. In some systems, you’ll need to boot into the BIOS/UEFI settings and enable or move USB boot up in the boot order, or manually boot from USB in the boot selection menu (the button to enter the boot selection screen varies by manufacturer). Then it will boot into the live OS and you can click the install button when it pops up. It’s actually almost the exact same process you’d use to make installation media for Windows and install it to another device, except Microsoft also has their own installation media creator that asks for the same stuff as Rufus if you don’t want to use the ISO from Microsoft for whatever reason. You could also just use Rufus with the Windows ISO though, it’s essentially the exact same thing.

Yes, OK, I do understand all that; I have used Linux for many years, which includes installing it from time to time.

I am just concerned that all this is beyond the capability of ordinary people, and we need those people if Linux is to thrive. Just the terms and vocab you use in your explanation will leave most of those people mystified. And the ones who decide to take the plunge anyway find themselves with choices that they should not have to face. I speak from experience. I am not a born geek myself; I was a history major.

Anyway, I’ve already had this debate with others here. My opinion does not seem very popular, I get it.

> Linux distros are not going to distribute .exe files

One or two distros do. I believe Fedora offers an all-in-one installer executable.

As for the question of trust, the advantage of bundling the installer with the ISO is that you remove third parties. If I trust the distro and my TLS connection to the distro’s website, that’s good enough for me and should be good enough for most users.

Just my opinion.

As I understand it, there isn’t really a canonical way to burn an ISO. Any tool that copies a file bit for bit to another file should be able to copy a disk image to a disk. Even basic shell tools should do the job. E.g.

cat my.iso > /dev/myusbstick

reads the file and puts it on the stick (which is also a file). cat reads a file byte for byte (test by cat’ing any binary) and writes it to standard output, which the shell redirects to another file; that file can be a device file like a USB stick.

There’s no practical difference between those tools, besides how fast they are. E.g. dd without the block size set to >=1K is pretty slow [1], but I guess most tools select large enough I/O sizes to be nearly identical (e.g. cp).

[1] https://superuser.com/questions/234199/good-block-size-for-disk-cloning-with-diskdump-dd#234204
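To see the block-size effect from the footnote on your own machine, here is a rough Python timing sketch. It writes a scratch file, warms the page cache once, then times reading it back with several block sizes via os.read. Results depend heavily on the OS, filesystem, and cache state, so treat the numbers as relative only, not as a real disk benchmark; the function names and the default sizes are arbitrary choices.

```python
import os
import tempfile
import time

BINARY = getattr(os, "O_BINARY", 0)  # only meaningful on Windows


def time_reads(path: str, block_size: int) -> float:
    """Read the whole file with the given block size; return elapsed seconds."""
    fd = os.open(path, os.O_RDONLY | BINARY)
    try:
        start = time.perf_counter()
        while os.read(fd, block_size):  # loop ends on the empty EOF read
            pass
        return time.perf_counter() - start
    finally:
        os.close(fd)


def benchmark(size: int = 64 * 1024 * 1024,
              block_sizes=(512, 4096, 128 * 1024, 256 * 1024, 1024 * 1024)) -> dict:
    """Return {block_size: seconds} for reading a scratch file of `size` bytes."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "scratch.bin")
        with open(path, "wb") as f:
            f.write(os.urandom(size))
        time_reads(path, 1024 * 1024)  # warm-up pass so every run sees a cached file
        return {bs: time_reads(path, bs) for bs in block_sizes}


if __name__ == "__main__":
    for bs, secs in benchmark().items():
        print(f"bs={bs:>8}: {secs:.3f} s")
```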

Given that this is the crucial first step in installing Linux on a new computer, the fact that there is so much mystery and arbitrariness around it seems to me pretty revealing and symptomatic of Linux’s general inability to reach ordinary folks.

Thanks for the information.

It’s actually exactly the opposite case. Any method to copy data from one location to another will work, such as using dd, or cat, or even just formatting the drive and using cp. If you don’t want to deal with command line tools, there are many GUI tools that are literally just a couple of buttons to press on all major operating systems. My personal preference for GUI liveUSB tools is Balena Etcher or Fedora Media Writer. Both are incredibly easy to use and feature intuitive GUIs. There is no “mystery” or “arbitrariness” surrounding it at all; it’s a pretty straightforward process. Copy data from one place to another and you’re done. The only differences between the methods, as the previous comment said, are the speed of the transfer and some minor things that the end user has basically no reason to think about.

As far as its ease of installation and use goes, my tech-illiterate father was able to install and use Linux Mint with zero problems and has been using it for years, so I’d say it does a pretty damn good job overall.

Wish I could agree with you on the underlying point.

My own anecdata is of normies who are absolutely resistant to the very idea of touching Linux because of its connotations of complexity. And really I don’t think they’re wrong.

> My personal preference for GUI liveUSB tools is [etc]

Imagine what even this phrase must sound like to someone who has only ever used pre-installed Windows. Not being facetious.

My opinion remains that Linux could only benefit if distros would take hand-holding to its logical extreme and provide actual Windows/Mac executables that make installing Linux a genuinely one-click experience. Last time I checked, Fedora did this. Pity it’s Fedora and not Debian.

Does cp capture the raw bits like dd does?

Not saying that it’s useful in the case you’re describing, but that’s always been the reason I use it: when I want every bit on a disk copied to another disk, even the parts with no metadata present.

As long as you copy from the device file (/dev/whatever), you will get “the raw bits”, regardless of whether you use dd, cp, or even cat.

That makes sense. Thanks!
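If you want to confirm that a copy really is bit-for-bit identical (what cmp does), comparing the two in chunks is enough. Below is a minimal Python sketch; the function name is hypothetical. When the target is a larger device, only the first len(image) bytes are compared, since the rest of the stick still holds whatever was there before. Reading a device node like /dev/whatever normally requires root.

```python
import os


def same_bytes(image_path: str, target_path: str, block_size: int = 1024 * 1024) -> bool:
    """True if target starts with exactly the bytes of image (bit-for-bit)."""
    remaining = os.path.getsize(image_path)  # image is a regular file
    with open(image_path, "rb") as a, open(target_path, "rb") as b:
        while remaining > 0:
            n = min(block_size, remaining)
            # A short read from b (target too small) also counts as a mismatch.
            if a.read(n) != b.read(n):
                return False
            remaining -= n
    return True
```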

For those who like the progress output dd provides (status=progress), I highly recommend pv:

pv diskimage.iso > /dev/usb
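Roughly, what pv adds on top of a plain redirect is a progress readout while the data streams through. Here is a toy Python sketch of the same idea: it copies in chunks and calls a callback with the bytes copied so far. The names are hypothetical, and real pv does much more (transfer rate, ETA, rate limiting).

```python
import sys
from typing import BinaryIO, Callable, Optional


def copy_with_progress(src: BinaryIO, dst: BinaryIO, total: Optional[int],
                       report: Callable[[int, Optional[int]], None],
                       block_size: int = 256 * 1024) -> int:
    """Stream src to dst, calling report(copied, total) after each chunk."""
    copied = 0
    while True:
        chunk = src.read(block_size)
        if not chunk:  # end of input
            break
        dst.write(chunk)
        copied += len(chunk)
        report(copied, total)
    dst.flush()
    return copied


def print_progress(copied: int, total: Optional[int]) -> None:
    """Overwrite a single status line on stderr, like pv does."""
    if total:
        sys.stderr.write(f"\r{copied / total:6.1%} ({copied} bytes)")
    else:
        sys.stderr.write(f"\r{copied} bytes")
```

Used like the pv command above, you would open diskimage.iso with "rb" and the target with "wb", then pass os.path.getsize("diskimage.iso") as total and print_progress as report. As with any of these tools, writing to the device overwrites whatever is on it.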

For me, I only got to 5 Gbps or so on my NVMe using dd and fine-tuning bs=. In second place was Steam with ~3 Gbps. Same story with my 1 Gbps USB stick. Though the time I saved through the extra speed was more than eaten up by the time it took me to fine-tune.

Sorry for the nitpick, but you probably mean GB/s (or GiB/s, but I won’t go there). Gbps is gigabits per second, not gigabytes per second.

Since both are used in different contexts, yet differ by a factor of 8, it’s useful not to confuse the two.
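The arithmetic behind the nitpick, as a small Python sketch: 1 Gbps is 10^9 bits per second, so dividing by 8 bits per byte gives GB/s, and dividing bytes per second by 2^30 gives GiB/s. For example, 5 Gbps is 0.625 GB/s, or about 0.58 GiB/s. The function names are illustrative only.

```python
BITS_PER_BYTE = 8
GB = 10**9   # gigabyte (SI, decimal)
GIB = 2**30  # gibibyte (IEC, binary)


def gbps_to_gb_per_s(gbps: float) -> float:
    """Gigabits per second -> gigabytes per second."""
    return gbps * GB / BITS_PER_BYTE / GB


def gbps_to_gib_per_s(gbps: float) -> float:
    """Gigabits per second -> gibibytes per second."""
    return gbps * GB / BITS_PER_BYTE / GIB


if __name__ == "__main__":
    for rate in (1, 3, 5):
        print(f"{rate} Gbps = {gbps_to_gb_per_s(rate):.3f} GB/s "
              f"= {gbps_to_gib_per_s(rate):.3f} GiB/s")
```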

This is a good example of how most of the performance improvements during a rewrite into a new language come from the lessons learned and the fresh codebase, rather than from the language’s strengths.

This is the best summary I could come up with:

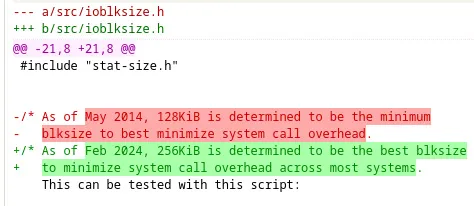

One of the interesting improvements to note with GNU Coreutils 9.5 is that the cp, mv, install, cat, and split commands can now read/write a minimum of 256KiB at a time.

Previously the minimum was 128KiB; it has been doubled to improve the throughput of Coreutils on modern systems.

Thanks to this change, throughput with Coreutils 9.5 increases by 10 to 20% when reading cached files on modern systems.
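The summary doesn’t spell out how the buffer size is chosen. As a rough illustration of the “minimum” idea, here is a simplified Python sketch, assuming the tool starts from the filesystem’s preferred block size (st_blksize) and rounds it up to the smallest multiple that is at least 256KiB. This is modeled loosely on coreutils’ behavior, not copied from its source, and the function names are hypothetical.

```python
import os

IO_BUFSIZE = 256 * 1024  # the new 9.5 minimum (previously 128 KiB)


def pick_io_size(st_blksize: int, minimum: int = IO_BUFSIZE) -> int:
    """Smallest multiple of st_blksize that is >= minimum.

    Non-positive block sizes (bogus stat data) fall back to `minimum`.
    Simplified illustration, not the actual coreutils code.
    """
    if st_blksize <= 0:
        return minimum
    multiples = -(-minimum // st_blksize)  # ceiling division
    return multiples * st_blksize


def io_size_for(path: str) -> int:
    # st_blksize is not available on every platform (e.g. Windows), hence getattr.
    return pick_io_size(getattr(os.stat(path), "st_blksize", 0))


if __name__ == "__main__":
    for blksize in (512, 4096, 1024 * 1024):
        print(blksize, "->", pick_io_size(blksize))
```

Under these assumptions a typical 4096-byte st_blksize yields 256KiB under the new minimum and 128KiB under the old one, while a filesystem reporting 1MiB keeps its larger size.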

This default I/O size was last adjusted a decade ago.

GNU Coreutils 9.5 also fixes various warnings when interacting with the CIFS file system; join and uniq better handle multi-byte characters; tail no longer mishandles input from the /proc and /sys file systems; several commands gain new options; SELinux operations during file copies are now more efficient; and tail can now follow multiple processes via repeated “--pid” options.

More details on all of the GNU Coreutils 9.5 changes via the release announcement.

The original article contains 263 words, the summary contains 155 words. Saved 41%. I’m a bot and I’m open source!